Shader "chicai/Circle"

{

Properties {

_Value("Value",float) = 0.5

_Controll("Vector",vector) = (0,0,0,0)

}

SubShader

{

Pass

{

CGPROGRAM

#pragma vertex vert

#pragma fragment frag

#include "UnityCG.cginc"

struct appdata

{

float4 vertex:POSITION;

fixed2 uv:TEXCOORD0;

};

struct v2f

{

float4 position:SV_POSITION;

fixed2 uv:TEXCOORD0;

};

float _Value;

half4 _Controll;

v2f vert(appdata IN)

{

v2f o = (v2f)0; // zero-initialize; some platforms require it

o.position = UnityObjectToClipPos(IN.vertex);

o.uv = IN.uv;

return o;

}

float4 frag(v2f IN):SV_TARGET

{

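// length(IN.uv.xy - 0.5): distance from the UV center, growing outward

// step: 1 inside the radius, 0 outside, which gives a solid circle

//return step(length(IN.uv.xy-0.5), _Value);

// Circle with a smooth edge: 0 near the center, rising to 1 between _Controll.x and _Controll.y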

return smoothstep(_Controll.x ,_Controll.y, length(IN.uv.xy - 0.5));

}

ENDCG

}

}

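}

abs(-1.2) // 1.2, absolute value

frac(2.5) // 0.5, fractional part

floor(0.5) // 0, round down

ceil(0.5) // 1, round up

max(0.1,0.3) // 0.3, larger value; useful for lifting dark areas

min(0.1,0.3) // 0.1, smaller value; useful for capping brightness

pow(0.1,2) // 0.01, power

rcp(0.1) // 10, reciprocal

exp(2) // e to the power of 2, about 7.389

exp2(2) // 4, 2 to the power of 2

fmod(0.1,0.2) // 0.1, remainder; same as 0.1 % 0.2

saturate(x) // clamps x to the range 0 to 1

clamp(x,min,max) // clamps x to the range min to max

sqrt(0.01) // 0.1, square root

rsqrt(0.01) // 10, reciprocal of the square root

lerp(a,b,t) // linear interpolation from a to b, with t in [0,1]

sin(x) // sine

cos(x) // cosine

distance(a,b) // distance between a and b

length(a) // length of vector a

step(a,b) // returns 1 if a <= b, otherwise 0

smoothstep(min,max,x) // smooth transition: 0 when x is below min, 1 when x is above max, smoothly interpolated between 0 and 1 in between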
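As a quick illustration of how a few of these intrinsics combine, the sketch below builds a soft-edged ring mask from `length`, `smoothstep`, and `saturate`. The function name `RingMask` and its parameters are hypothetical and do not appear in the shaders in these notes; it assumes `softness` is greater than 0, since `smoothstep` with equal edges is undefined.

```hlsl
// Hypothetical helper: returns 1 inside a ring of the given radius and width,
// fading to 0 over `softness` on both edges. uv is expected in [0,1].
float RingMask(float2 uv, float radius, float width, float softness)
{
    float d = length(uv - 0.5);          // distance from the UV center
    float halfWidth = width * 0.5;

    // 0 -> 1 across the inner edge of the ring
    float inner = smoothstep(radius - halfWidth - softness, radius - halfWidth, d);

    // 1 -> 0 across the outer edge of the ring
    float outer = 1.0 - smoothstep(radius + halfWidth, radius + halfWidth + softness, d);

    return saturate(inner * outer);
}
```

Called from a fragment function as `RingMask(IN.uv, 0.3, 0.05, 0.01)`, it should draw a thin ring centered in UV space.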

//Properties

[Toggle]_DissolveEnable("Dissolve Enable",int) = 0

[MaterialToggle(MASKENABLE)]_MaskEnable("Mask Enable",int) = 0

//Pass

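// Matches _DissolveEnable; multi_compile always compiles both variants
#pragma multi_compile _ _DISSOLVEENABLE_ON

// Matches _DissolveEnable; shader_feature compiles only the variants that are actually used
//#pragma shader_feature _ _DISSOLVEENABLE_ON

// Matches MASKENABLE; compiled only when actually used
#pragma shader_feature _ MASKENABLE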

#if _DISSOLVEENABLE_ON

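#endif

In Unity, the width and height captured by the camera always keep the same aspect ratio as the target viewport, so the image is not stretched.

Change the aspect ratio of the Game view and watch how the camera frustum changes.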

Shader "Unlit/Matcap"

{

Properties

{

_MainTex ("Texture", 2D) = "white" {}

_Matcap("Matcap",2D) = "white"{}

_MatcapPower("MatcapPower", Float) = 2

_MatcapAdd("MatcapAdd",2D) = "white"{}

_MatcapAddPower("MatcapAddPower", Float) = 2

_RampTex("RampTex",2D) = "white"{}

}

SubShader

{

Tags { "RenderType"="Opaque" }

LOD 100

Pass

{

CGPROGRAM

#pragma vertex vert

#pragma fragment frag

#include "UnityCG.cginc"

struct appdata

{

float4 vertex : POSITION;

float2 uv : TEXCOORD0;

float3 normal :NORMAL;

};

struct v2f

{

float2 uv : TEXCOORD0;

float4 vertex : SV_POSITION;

float3 normal_world:TEXCOORD1;

float3 pos_world:TEXCOORD2;

};

sampler2D _MainTex;

float4 _MainTex_ST;

sampler2D _Matcap;

float _MatcapPower;

sampler2D _MatcapAdd;

float _MatcapAddPower;

sampler2D _RampTex;

v2f vert (appdata v)

{

v2f o;

o.vertex = UnityObjectToClipPos(v.vertex);

o.uv = TRANSFORM_TEX(v.uv, _MainTex);

o.normal_world = mul(float4(v.normal,0), unity_WorldToObject).xyz; // world-space normal; .xyz avoids an implicit float4-to-float3 truncation

o.pos_world = mul(unity_ObjectToWorld, v.vertex).xyz;

return o;

}

fixed4 frag (v2f i) : SV_Target

{

// Main texture

float4 tex_color = tex2D(_MainTex,i.uv);

// Matcap: derive the sampling UV from the view-space normal

float3 normal_world = normalize(i.normal_world);

float3 normal_view = mul(UNITY_MATRIX_V, float4(normal_world,0)).xyz;

float2 map_cap_uv = (normal_view.xy + float2(1,1)) * 0.5;

float4 mapcap_color = tex2D(_Matcap, map_cap_uv) * _MatcapPower;

// Additive Matcap layer

float4 matcap_add = tex2D(_MatcapAdd, map_cap_uv) * _MatcapAddPower;

// Sample the ramp texture with a Fresnel term (1 - NdotV)

float3 view_world = normalize(_WorldSpaceCameraPos.xyz - i.pos_world);

half NdotV = saturate(dot(normal_world,view_world));

half ramp_u = 1 - NdotV; // not named "sample", which is an HLSL keyword and may fail to compile on some targets

float4 ramp_color = tex2D(_RampTex, float2(ramp_u,ramp_u));

float4 final_color = mapcap_color * tex_color * ramp_color + matcap_add;

return final_color;

}

ENDCG

}

}

}

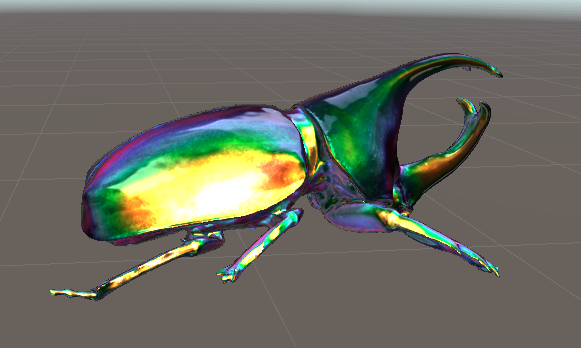

Matcap texture
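The Matcap lookup above boils down to rotating the normal into view space and remapping its xy from [-1,1] to [0,1]. The sketch below gathers that into one helper using `UnityObjectToWorldNormal` from UnityCG.cginc. The name `MatcapUV` is hypothetical, and computing the UV per vertex instead of per fragment is an assumption that trades some accuracy on low-poly meshes for less fragment work.

```hlsl
// Hypothetical helper; assumes UnityCG.cginc is included.
// Takes an object-space normal and returns the Matcap sampling UV.
float2 MatcapUV(float3 normalOS)
{
    // Normalized world-space normal (handles non-uniform scale)
    float3 normalWS = UnityObjectToWorldNormal(normalOS);

    // Rotate into view space; the 3x3 cast drops the translation part
    float3 normalVS = mul((float3x3)UNITY_MATRIX_V, normalWS);

    // Remap xy from [-1,1] to [0,1]
    return normalVS.xy * 0.5 + 0.5;
}
```

The result can be passed to `tex2D(_Matcap, ...)` in place of `map_cap_uv`.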

Shader "Unlit/Scan"

{

Properties

{

_MainTex ("Texture", 2D) = "white" {}

_RimMin("RimMin", Range(-1,1)) = 0.0

_RimMax("RimMax",Range(0,2)) = 0.0

_InnerColor("Inner Color",Color) = (0.0,0.0,0.0,0.0)

_RimColor("Rim Color",Color) = (0.0,0.0,0.0,0.0)

_RimIntensity("Rim Intensity",Float) = 1.0

_FlowSpeed("Flow Speed",Float) = 1

_Alaph("Alpha", Float) = 0.75

_EmissPower("Emiss Power", Float) = 2

_FlowTex("Flow Texture",2D) = "white"{} // flow (scan light) texture

}

SubShader

{

Tags { "Queue"="Transparent" "RenderType"="Transparent" }

LOD 100

Pass

{

ZWrite On

ColorMask 0

}

Pass

{

ZWrite Off

Blend SrcAlpha One

CGPROGRAM

#pragma vertex vert

#pragma fragment frag

#include "UnityCG.cginc"

struct appdata

{

float4 vertex : POSITION;

float2 texcoord : TEXCOORD0;

float3 normal:NORMAL;

};

struct v2f

{

float4 vertex : SV_POSITION;

float2 uv : TEXCOORD0;

float3 normal_world :TEXCOORD1;

float3 pos_world:TEXCOORD2;

float3 pivot_world:TEXCOORD3;

};

sampler2D _MainTex;

float4 _MainTex_ST;

float _RimMin;

float _RimMax;

float4 _InnerColor;

float4 _RimColor;

float _RimIntensity;

float _FlowSpeed;

sampler2D _FlowTex;

float _Alaph;

float _EmissPower;

v2f vert (appdata v)

{

v2f o;

o.vertex = UnityObjectToClipPos(v.vertex);

o.normal_world = mul(float4(v.normal,0.0), unity_WorldToObject).xyz; // world-space normal

o.pos_world = mul(unity_ObjectToWorld, v.vertex).xyz;

o.pivot_world = mul(unity_ObjectToWorld, float4(0,0,0,1)).xyz; // world position of the model's pivot

o.uv = v.texcoord;

return o;

}

fixed4 frag (v2f i) : SV_Target

{

// Rim light

half3 normal_world = normalize(i.normal_world);

half3 view_world = normalize(_WorldSpaceCameraPos.xyz - i.pos_world);

half NdotV = saturate(dot(normal_world, view_world));

half fresnel = 1 - NdotV;

fresnel = smoothstep(_RimMin,_RimMax, fresnel);

half emiss = tex2D(_MainTex, i.uv).r; // the R channel of the model texture drives emission

emiss = pow(emiss, _EmissPower);

half final_fresnel = saturate(fresnel + emiss);

half3 final_rim_color = lerp(_InnerColor.rgb, _RimColor.rgb * _RimIntensity, fresnel);

half final_rim_alpha = final_fresnel;

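// Flow light: sample the texture with world-space xy; subtracting the pivot keeps the pattern independent of the object's position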

half2 uv_flow = (i.pos_world.xy - i.pivot_world.xy);

uv_flow = uv_flow + _Time.y * _FlowSpeed;

float4 flow_rgba = tex2D(_FlowTex, uv_flow);

float3 final_col = final_rim_color + flow_rgba.xyz;

float final_alpha = final_rim_alpha + _Alaph;

return fixed4(final_col,final_alpha);

}

ENDCG

}

}

}

This effect can be built from a gradient texture, a noise texture, and a circular mesh, using alpha testing (clip).

Shader "Clip/Bo"

{

Properties

{

_MainTex ("Texture", 2D) = "" {}

_MainColor("Main Color",Color) = (1,1,1,1)

_NoiseMap("NoiseMap", 2D) = "" {}

_Cutout("Cutout", Range(0.0,1.1)) = 0.0

_Speed("Speed", Vector) = (.34, .85, .92, 1)

}

SubShader

{

Tags { "RenderType"="Opaque" "DisableBatching"="True"}

Pass

{

CGPROGRAM

#pragma vertex vert

#pragma fragment frag

#include "UnityCG.cginc"

struct appdata

{

float4 vertex : POSITION;

half2 texcoord0 : TEXCOORD0;

};

struct v2f

{

float4 pos : SV_POSITION;

float4 uv : TEXCOORD0;

};

sampler2D _MainTex;

float4 _MainTex_ST;

float _Cutout;

float4 _Speed;

sampler2D _NoiseMap;

float4 _NoiseMap_ST;

float4 _MainColor;

// Vertex shader

v2f vert (appdata v)

{

v2f o;

o.pos = UnityObjectToClipPos(v.vertex);

o.uv.xy = TRANSFORM_TEX(v.texcoord0, _MainTex);

o.uv.zw = TRANSFORM_TEX(v.texcoord0, _NoiseMap);

return o;

}

// Fragment shader

half4 frag (v2f i) : SV_Target

{

half gradient = tex2D(_MainTex, i.uv.xy + _Time.y * 0.1f * _Speed.xy).r * (1.0 - i.uv.y);

half noise = 1.0 - tex2D(_NoiseMap, i.uv.zw + _Time.y * 0.1f * _Speed.zw).r;

clip(gradient - noise - _Cutout);

return _MainColor;

}

ENDCG

}

}

}

// Unity built-in shader source. Copyright (c) 2016 Unity Technologies. MIT license (see license.txt)

Shader "Custom/FlowLightShader"

{

Properties

{

[PerRendererData] _MainTex ("Sprite Texture", 2D) = "white" {}

_Color ("Tint", Color) = (1,1,1,1)

_StencilComp ("Stencil Comparison", Float) = 8

_Stencil ("Stencil ID", Float) = 0

_StencilOp ("Stencil Operation", Float) = 0

_StencilWriteMask ("Stencil Write Mask", Float) = 255

_StencilReadMask ("Stencil Read Mask", Float) = 255

_ColorMask ("Color Mask", Float) = 15

//ADD---------

lightTime("Light Time", Float) = 0.6

thick("Light Thick", Float) = 0.3

nextTime("Next Light Time", Float) = 2

angle("Light Angle", int) = 45

//ADD---------

[Toggle(UNITY_UI_ALPHACLIP)] _UseUIAlphaClip ("Use Alpha Clip", Float) = 0

}

SubShader

{

Tags

{

"Queue"="Transparent"

"IgnoreProjector"="True"

"RenderType"="Transparent"

"PreviewType"="Plane"

"CanUseSpriteAtlas"="True"

}

Stencil

{

Ref [_Stencil]

Comp [_StencilComp]

Pass [_StencilOp]

ReadMask [_StencilReadMask]

WriteMask [_StencilWriteMask]

}

Cull Off

Lighting Off

ZWrite Off

ZTest [unity_GUIZTestMode]

Blend SrcAlpha OneMinusSrcAlpha

ColorMask [_ColorMask]

Pass

{

Name "Default"

CGPROGRAM

#pragma vertex vert

#pragma fragment frag

#pragma target 2.0

#include "UnityCG.cginc"

#include "UnityUI.cginc"

#pragma multi_compile_local _ UNITY_UI_CLIP_RECT

#pragma multi_compile_local _ UNITY_UI_ALPHACLIP

struct appdata_t

{

float4 vertex : POSITION;

float4 color : COLOR;

float2 texcoord : TEXCOORD0;

UNITY_VERTEX_INPUT_INSTANCE_ID

};

struct v2f

{

float4 vertex : SV_POSITION;

fixed4 color : COLOR;

float2 texcoord : TEXCOORD0;

float4 worldPosition : TEXCOORD1;

float4 mask : TEXCOORD2;

UNITY_VERTEX_OUTPUT_STEREO

};

sampler2D _MainTex;

fixed4 _Color;

fixed4 _TextureSampleAdd;

float4 _ClipRect;

float4 _MainTex_ST;

float _UIMaskSoftnessX;

float _UIMaskSoftnessY;

//ADD---------

half lightTime;

int angle;

float thick;

float nextTime;

//ADD---------

v2f vert(appdata_t v)

{

v2f OUT;

UNITY_SETUP_INSTANCE_ID(v);

UNITY_INITIALIZE_VERTEX_OUTPUT_STEREO(OUT);

float4 vPosition = UnityObjectToClipPos(v.vertex);

OUT.worldPosition = v.vertex;

OUT.vertex = vPosition;

float2 pixelSize = vPosition.w;

pixelSize /= float2(1, 1) * abs(mul((float2x2)UNITY_MATRIX_P, _ScreenParams.xy));

float4 clampedRect = clamp(_ClipRect, -2e10, 2e10);

float2 maskUV = (v.vertex.xy - clampedRect.xy) / (clampedRect.zw - clampedRect.xy);

OUT.texcoord = TRANSFORM_TEX(v.texcoord.xy, _MainTex);

OUT.mask = float4(v.vertex.xy * 2 - clampedRect.xy - clampedRect.zw, 0.25 / (0.25 * half2(_UIMaskSoftnessX, _UIMaskSoftnessY) + abs(pixelSize.xy)));

OUT.color = v.color * _Color;

return OUT;

}

fixed4 frag(v2f IN) : SV_Target

{

half4 color = (tex2D(_MainTex, IN.texcoord) + _TextureSampleAdd);

//ADD---------

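// float rather than fixed: these values can leave fixed's guaranteed [-2, 2] range

float currentTimePassed = fmod(_Time.y, lightTime + nextTime);

float x = currentTimePassed / lightTime; // 0-1: sweep in progress; >1: waiting for the next sweep

float tanx = tan(0.0174*angle); // slope uv.y / uv.x; 0.0174 is roughly 3.14/180 (degrees to radians)

x += (x-1)/tanx; // shift so the band sweeps from one end to the other

float x1 = IN.texcoord.y /tanx + x; // slanted band start: uv.x offset derived from uv.y

float x2 = x1 + thick; // band end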

if(IN.texcoord.x > x1 && IN.texcoord.x < x2)

{

fixed dis = abs(IN.texcoord.x-(x1+x2)/2); // distance from the center of the band

dis = 1-dis*2/thick; // largest (1) at the band center, 0 at its edges

half ca = color.a;

color += color*(1.4*dis); // brighten the color

color.a = ca;

}

//ADD---------

#ifdef UNITY_UI_CLIP_RECT

half2 m = saturate((_ClipRect.zw - _ClipRect.xy - abs(IN.mask.xy)) * IN.mask.zw);

color.a *= m.x * m.y;

#endif

#ifdef UNITY_UI_ALPHACLIP

clip (color.a - 0.001);

#endif

return color;

}

ENDCG

}

}

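}

Download the built-in shaders for your Unity version from the official site, find UI-Default.shader, and add the code marked with ADD---------. Then create a new material with this shader and assign it as the Image's material.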

Shader "Custom/RubCard" {

Properties {

_Color ("Main Color", Color) = (1,1,1,1)//Tint Color

_MainTex ("Base (RGB)", 2D) = "white" {}

_MainTex_2 ("Base (RGB)", 2D) = "white" {}

ratioY ("ratioY", Float) = 0

radius ("radius", Float) = 0

height ("height", Float) = 0

anchorY ("anchorY", Float) = 0

rubOffset ("rubOffset", Float) = 0

maxRubAngle ("maxRubAngle", Float) = 0

_ZOffsetOne("ZOffsetOne",Float)=0

_ZOffsetTwo("ZOffsetTwo",Float)=0

_Move("Move",Float)=0

}

SubShader {

Tags {"Queue"="Geometry" "RenderType"="Opaque" }

LOD 100

Pass {

Name "ONE"

Tags{"LightMode" = "UniversalForward"}

Cull Front

Lighting Off

CGPROGRAM

#pragma vertex vert

#pragma fragment frag

#include "UnityCG.cginc"

#include "Lighting.cginc"

sampler2D _MainTex;

float4 _MainTex_ST;

fixed4 _Color;

float ratioY;

float radius;

float height;

float anchorY;

float rubOffset;

float maxRubAngle;

float _ZOffsetOne;

float _Move;

struct appdata

{

float4 vertex : POSITION;

float2 uv : TEXCOORD0;

};

struct v2f

{

float2 uv : TEXCOORD0;

float4 vertex : SV_POSITION;

};

v2f vert (appdata v)

{

v2f o;

float4 tmp_pos = v.vertex;

float tempAngle = 1.0;

tmp_pos.xyz = mul(unity_ObjectToWorld,float4(tmp_pos.xyz,0)).xyz;

float pi = 3.14159;

float halfPeri = radius * pi * maxRubAngle;

float rubLenght = height * (ratioY - anchorY);

tmp_pos.y += height*rubOffset;

float tempPosY = tmp_pos.y;

if(tmp_pos.y < rubLenght)

{

float dy = (rubLenght - tmp_pos.y);

if(rubLenght - halfPeri < tmp_pos.y)

{

float angle = dy/radius;

tmp_pos.y = rubLenght - sin(angle)*radius;

tmp_pos.z = radius * (1.0- cos(angle));

}

else

{

float tempY = rubLenght - halfPeri - tmp_pos.y;

float tempAngle = pi * (1.0-maxRubAngle);

tmp_pos.y = rubLenght - radius*sin(tempAngle) + tempY * cos(tempAngle);

tmp_pos.z = radius + radius*cos(tempAngle) + tempY * sin(tempAngle);

}

}

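// Note: HLSL indexes matrices as [row][column], so [3][0..2] reads the bottom row (0,0,0), not the translation column

tmp_pos.x += unity_ObjectToWorld[3][0];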

tmp_pos.y += unity_ObjectToWorld[3][1];

tmp_pos.z += unity_ObjectToWorld[3][2];

float4 pos = mul(UNITY_MATRIX_VP, tmp_pos);

float tempOffset = _ZOffsetOne * pos.w;

if (tempPosY < rubLenght )

{

float dy = (rubLenght - tempPosY);

if( dy > pi * radius)

{

tempOffset = -tempOffset*2.0;

}

}

pos.z = pos.z + tempOffset;

pos.x += _Move;

o.vertex = pos;

o.uv = TRANSFORM_TEX(v.uv, _MainTex);

o.uv.x = 1 - o.uv.x; // flip U

return o;

}

fixed4 frag (v2f i) : SV_Target

{

fixed4 col = tex2D(_MainTex, i.uv);

clip (col.a - 0.5);

return col * _Color;

}

ENDCG

}

Pass

{

Name "TWO"

Cull Back

Lighting Off

CGPROGRAM

#pragma vertex vert

#pragma fragment frag

#include "UnityCG.cginc"

#include "Lighting.cginc"

sampler2D _MainTex_2;

float4 _MainTex_2_ST;

fixed4 _Color;

float ratioY;

float radius;

float height;

float anchorY;

float rubOffset;

float maxRubAngle;

float _ZOffsetTwo;

float _Move;

struct appdata

{

float4 vertex : POSITION;

float2 uv : TEXCOORD0;

};

struct v2f

{

float2 uv : TEXCOORD0;

float4 vertex : SV_POSITION;

};

v2f vert (appdata v)

{

v2f o;

float4 tmp_pos = v.vertex;

float tempAngle = 1.0;

tmp_pos.xyz = mul(unity_ObjectToWorld,float4(tmp_pos.xyz,0)).xyz;

//tmp_pos.xyz = (modelMatrix * vec4(tmp_pos.xyz, 0.0)).xyz;

float pi = 3.14159;

float halfPeri = radius * pi * maxRubAngle;

float rubLenght = height * (ratioY - anchorY);

tmp_pos.y += height*rubOffset;

float tempPosY = tmp_pos.y;

if(tmp_pos.y < rubLenght)

{

float dy = (rubLenght - tmp_pos.y);

if(rubLenght - halfPeri < tmp_pos.y)

{

float angle = dy/radius;

tmp_pos.y = rubLenght - sin(angle)*radius;

tmp_pos.z = radius * (1.0- cos(angle));

}

else

{

float tempY = rubLenght - halfPeri - tmp_pos.y;

float tempAngle = pi * (1.0-maxRubAngle);

tmp_pos.y = rubLenght - radius*sin(tempAngle) + tempY * cos(tempAngle);

tmp_pos.z = radius + radius*cos(tempAngle) + tempY * sin(tempAngle);

}

}

tmp_pos.x += unity_ObjectToWorld[3][0];

tmp_pos.y += unity_ObjectToWorld[3][1];

tmp_pos.z += unity_ObjectToWorld[3][2];

float4 pos = mul(UNITY_MATRIX_VP, tmp_pos);

float tempOffset = _ZOffsetTwo * pos.w;

if (tempPosY < rubLenght )

{

float dy = (rubLenght - tempPosY);

if( dy > pi* radius)

{

tempOffset = -tempOffset * 2.0;

}

}

pos.z = pos.z + tempOffset;

pos.x += _Move;

o.vertex = pos;

o.uv = TRANSFORM_TEX(v.uv, _MainTex_2);

o.uv.x = 1 - o.uv.x; // flip U

o.uv.y = 1 - o.uv.y; // flip V

return o;

}

fixed4 frag (v2f i) : SV_Target

{

fixed4 col = tex2D(_MainTex_2, i.uv);

clip (col.a - 0.5);

return col * _Color;

}

ENDCG

}

}

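}

Note: for URP to render a multi-pass shader, make sure one pass carries the UniversalForward LightMode tag. The remaining passes can carry the SRPDefaultUnlit tag or no LightMode tag at all.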
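A minimal skeleton of that pass layout, with the program bodies elided, might look like the following. The pass names mirror the RubCard shader above; the explicit SRPDefaultUnlit tag on the second pass is optional according to the note.

```shaderlab
SubShader
{
    Pass
    {
        Name "ONE"
        Tags { "LightMode" = "UniversalForward" }
        // ... CGPROGRAM / ENDCG for the first pass ...
    }

    Pass
    {
        Name "TWO"
        Tags { "LightMode" = "SRPDefaultUnlit" } // or no LightMode tag at all
        // ... CGPROGRAM / ENDCG for the second pass ...
    }
}
```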

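The shader converts the model's vertices to world space itself, so moving the object in the usual way does not change where the card is drawn. This is likely because the vertex is transformed with w = 0 and the `unity_ObjectToWorld[3][0..2]` terms read the matrix's bottom row, which is zero in HLSL. Offsets therefore have to be applied through the shader parameters: adjust them to position the card, then set the rub angle and the camera angle.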
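If the card should instead follow the object's Transform, one possible change (not what the shader above does) is to read the translation from the fourth column of `unity_ObjectToWorld`. The helper name `ObjectWorldPosition` is hypothetical. This is a sketch that assumes UnityCG.cginc is included.

```hlsl
// Hypothetical helper; assumes UnityCG.cginc is included.
// HLSL indexes matrices as [row][column], and Unity keeps the object's
// world-space translation in the fourth column of unity_ObjectToWorld.
float3 ObjectWorldPosition()
{
    return float3(unity_ObjectToWorld[0][3],
                  unity_ObjectToWorld[1][3],
                  unity_ObjectToWorld[2][3]);
}
```

Adding `ObjectWorldPosition()` to `tmp_pos.xyz` in place of the three `unity_ObjectToWorld[3][...]` lines would make the card move with the object, at the cost of changing how the existing offset parameters need to be tuned.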